sfReapportion package, which performs areal-weighted interpolation on spatial objects such as census tracts and voting districts.

A colleague of mine recently shared some code for a research project on the upcoming municipal elections in France, but the code required the spReapportion package, which has been hard to install and use for a few years, due to some of its dependencies, maptools and rgeos, having been retired in favour of the sf package.

The spReapportion package, which performs areal-weighted interpolation, was coded by a friend of mine. I decided to port his package in order for it to lose its retired dependencies, and to have it accept sf objects as well as sp ones. The result is available on GitHub as the sfReapportion package.

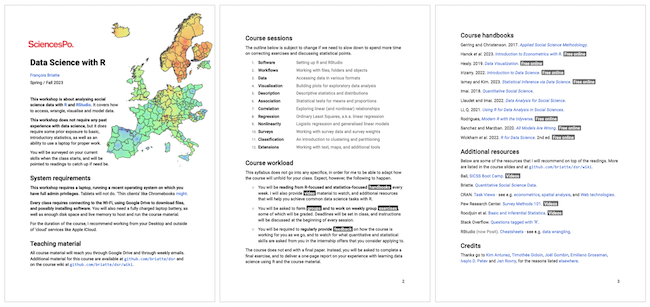

In parallel, I rewrote my other colleague's code in order to use that new package and to perform several other improvements. The maps shown below come from early results obtained with that code, which is also on GitHub.

Rationale

In France as in many if not most other countries, the census tracts, which are called IRIS, are spatially incongruous with voting districts. If one wants to use data collected at the tract-level with voting data collected at the district-level, then one first has to interpolate/reapportion that data to the spatial boundaries of voting districts.

The two maps below show the polling stations (or bureaux de vote, in French) of the city of Lille, the boundaries of which have been stable for several years. Each map shows the results of a distinct principal components analysis, followed by a hierarchal clustering of its principal components.

The map on the left is the interesting one. The data used for the underlying principal components analysis come from the French official statistics agency, Insee, which publishes that data at the tract-level. The data were reapportioned with sfReapportion in order to coincide with the boundaries of the voting districts.

Limitations

The sfReapportion package has only been lightly tested, especially when it comes to its weighted functions. However, the main function, which uses unweighted population counts, has been thoroughly tested, and its results have been reproduced with the areal package.

The sfReapportion package only performs extensive areal-weighted interpolation: for intensive or multiple (mixed) interpolation, users should turn to the areal package. Additional methods are also available from the populR package.

I do not plan to update the sfReapportion package much, as it was coded for reproducibility purposes, but users might open issues on its GitHub repository in order to ask questions or suggest improvements.